Test Data Preparation Techniques

Testers consume large amounts of test data throughout the software testing life cycle. They not only collect and manage data from existing sources, but also generate large volumes of test data themselves to make sure the product is of high quality before it is delivered for real-world use.

As a result, testers must continue to research, learn, and apply the most effective methods for data collection, generation, maintenance, test automation, and comprehensive data management for all types of functional and non-functional testing.

Table of contents:

- How to Create Test Data

- Test Data Preparation in Software Testing

- How to Prepare Test Data For Testing

- Test Data for the Performance Test Case

- What is the ideal test data?

- How to Prepare Data that will Ensure Wide Test Coverage?

- Data for Black Box Testing

- Properties Of A Good Test Data

- Test data preparation techniques

- Test Data Generation Approaches

- Test Data for White Box Testing

How to Create Test Data

Test data can be:

- Created manually,

- Generated with data generation tools,

- Retrieved from the existing production environment.

The data set can be synthetic (dummy values), but ideally it is representative (real) data with good coverage of the test cases; for security reasons, real data should be masked. This gives the best chance of high software quality, which is ultimately what we all want. “With the smallest feasible data collection, the optimal test data identifies all application faults.” So be cautious with dummy data, such as that produced by a random name generator or a credit card number generator. These generators tend to produce clean, well-formed values that rarely expose problems in the software you’re testing. You can, of course, still use synthetic data to enrich or mask your test database.

Test Data Preparation in Software Testing

Preparing data for testing is a time-consuming part of the software testing process. According to several studies, testers spend 30-60% of their time searching for, managing, and generating data for testing and development. The key causes are:

- Testing teams don’t have access to the data sources

- Large volumes of data

- Delays by software developers in giving testers access to production data

- Long refresh times

- Data dependencies/combinations

Testing teams don’t have access to the data sources

Access to data sources is restricted, especially with GDPR, PCI, HIPAA, and other data security standards in effect. Consequently, only a small number of employees have access to the data sources. The benefit of this policy is that it reduces the risk of a data leak. The downside is that test teams depend on others, which results in lengthy wait times.

Large volumes of data

Collecting data from a production database is akin to looking for a needle in a haystack. You need specific cases to run decent tests, and they’re hard to find when you’re digging through dozens of terabytes.

Delays by software developers in giving testers access to production data

Agile isn’t adopted everywhere yet. At many businesses, multiple development teams and users work on the same project, and consequently on the same databases. Apart from causing conflicts, this means the data set changes frequently and may no longer contain the correct (current) data when the next team starts testing the program.

Data dependencies/combinations

Most data values are only valid in combination with other data values. These dependencies make test cases far more complicated and time-consuming to prepare.

Long refresh times

Most testing teams cannot refresh the test database on their own. They have to ask the DBA for a refresh, and some teams wait days, if not weeks, for it to be completed.

How to Prepare Test Data For Testing

Test data management (TDM)

Because TDM is both complex and costly, some businesses cling to old habits. Their test teams then have to accept that:

- The data isn’t refreshed frequently (or at all).

- It does not include all of the data quality issues that occur in production.

- The data itself is responsible for a large number of bugs/faults in test cases.

That’s a shame, because it doesn’t have to be that complicated, and TDM pays for itself. Simple strategies can save a significant amount of time and money. They also support good testing and, as a result, high-quality software.

Test Data for the Performance Test Case

Performance tests require a large amount of data. Data created manually may not reveal subtle defects that only surface with real data generated by the application under test. If you need real data that you can’t get from a spreadsheet, ask your lead/manager to make it available from the live (production) environment. This data helps confirm that the program runs smoothly for all valid inputs.
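When real data is not available in the volume you need, a seeded generator can at least supply the bulk. The following is a minimal sketch, assuming a hypothetical student table with `student_id`, `age`, and `score` columns; the column names and value ranges are illustrative and not taken from any real application.

```python
import csv
import io
import random


def generate_rows(count, seed=42):
    """Yield `count` synthetic rows; the fixed seed makes every run repeatable."""
    rng = random.Random(seed)
    for student_id in range(1, count + 1):
        yield {
            "student_id": student_id,
            "age": rng.randint(18, 25),               # assumed valid age range
            "score": round(rng.uniform(0, 100), 1),   # assumed score range
        }


def write_csv(rows, stream):
    """Write rows to a text stream and return how many rows were written."""
    writer = csv.DictWriter(stream, fieldnames=["student_id", "age", "score"])
    writer.writeheader()
    written = 0
    for row in rows:
        writer.writerow(row)
        written += 1
    return written


# Usage: write 10,000 rows to an in-memory buffer (use a real file for load tests).
buffer = io.StringIO()
written = write_csv(generate_rows(10_000), buffer)
```

Because the rows are produced lazily, the same generator can be pointed at a file and scaled up without holding the whole data set in memory. Keep in mind that data generated this way is clean by construction, so it complements real data rather than replacing it.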

What is the ideal test data?

Test data can be called ideal if all of the application errors can be identified with the smallest possible data set. Try to prepare data that covers all application functions while staying within the budget and time constraints for data preparation and testing.

How to Prepare Data that will ensure Wide Test Coverage?

Consider the following categories when designing your data:

- No data: Run your test cases with blank or default data. Check that the error messages are appropriate.

- Valid data set: Create it to check that the application behaves as expected and that valid input data is saved properly in the database or files.

- Invalid data set: Create an invalid data set to test application behavior for negative field values and alphanumeric string inputs.

- Illegal data format: Create a data set with an illegal data format. The system should not accept data in an invalid or illegal format. Also make sure that appropriate error messages are generated.

- Boundary condition data set: A data set with values at and just outside the limits of the valid range. Identify the application’s boundary cases and create a data set that includes both lower and upper boundary conditions.

- Performance, load, and stress testing data set: This data set should be large.

Creating a separate data set for each test condition ensures that all test conditions are covered.
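The categories above can be sketched for a single numeric field. This is a minimal sketch, assuming a hypothetical `IntField` description with an inclusive valid range; the names and the sample values are illustrative only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class IntField:
    """Hypothetical integer input field with an inclusive valid range."""
    name: str
    minimum: int
    maximum: int


def coverage_data(field):
    """Return one small data set per category for the given field."""
    lo, hi = field.minimum, field.maximum
    return {
        "no_data": [None, ""],
        "valid": [lo + (hi - lo) // 2],
        "invalid": [-1, lo - 10, hi + 10],
        "illegal_format": ["abc", "12.5.3", "18 years"],
        # Values on each boundary and immediately on either side of it.
        "boundary": [lo - 1, lo, lo + 1, hi - 1, hi, hi + 1],
    }


def is_valid(field, value):
    """Reference check: an int (not a bool) inside the inclusive range."""
    return (
        isinstance(value, int)
        and not isinstance(value, bool)
        and field.minimum <= value <= field.maximum
    )


age = IntField("age", 18, 25)
data = coverage_data(age)
```

Each key maps to inputs for one test condition, so a failing test points straight at the category that broke. The large performance data set is left out here because it is usually generated separately.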

Data for Black Box Testing

Quality Assurance testers perform integration testing, system testing, and acceptance testing, which are commonly carried out as black box testing. In this kind of testing, the testers do not work with the internal structure, design, or code of the application under test. Their primary goal is to find and report defects. To do so, we apply several black box testing approaches to perform functional or non-functional testing.

At this point, the testers need test data as input in order to apply the black box testing techniques. They should also prepare data that exercises every application function while staying within the budget and schedule constraints.

Data set types such as the following can be used to design the data for our test cases:

- no data,

- valid data,

- invalid data,

- boundary condition data,

- illegal data format,

- decision table data,

- equivalence partition data,

- state transition data,

- use case data.

Before moving on to the data set categories, the testers begin collecting and analyzing data from the application under test (AUT). Define the data needs at the test-case level and label them as reusable or non-reusable when scripting your test cases. It helps if the data required for testing is well-defined and documented from the start, so you can refer to it afterwards.
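As an illustration of decision table data, the rows can be derived mechanically from the conditions. This is a minimal sketch, assuming a made-up rule (a result is published only for a registered student with no outstanding fees); the rule and all names are hypothetical and only serve to show the technique.

```python
from itertools import product

# Hypothetical conditions for the illustration.
CONDITIONS = ("registered", "fees_paid")


def expected_action(registered, fees_paid):
    """Assumed business rule: publish only when both conditions hold."""
    return "publish" if registered and fees_paid else "withhold"


def decision_table():
    """Build one test row per combination of condition values."""
    rows = []
    for values in product([True, False], repeat=len(CONDITIONS)):
        row = dict(zip(CONDITIONS, values))
        row["expected"] = expected_action(*values)
        rows.append(row)
    return rows


table = decision_table()
```

With two conditions this yields four rows; every added condition doubles the table, which is why decision tables are usually combined with equivalence partitioning to keep the data set small.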

Properties Of A Good Test Data

Suppose that, as a tester, you must test the ‘Examination Results’ section of a university’s website. Assume that the entire application has been integrated and is ‘Ready for Testing.’ The ‘Registration,’ ‘Courses,’ and ‘Finance’ modules are all related to the ‘Examination’ module.

Assume you have sufficient knowledge of the application and have compiled a comprehensive list of test scenarios. These test cases must now be designed, documented, and executed. In the ‘Actions/Steps’ or ‘Test Inputs’ section of each test case, you must specify the data accepted as input for the test.

The data used in test cases must be carefully chosen. The correctness of the ‘Actual Results’ section of the test case document depends largely on the test data. The steps taken to prepare the input test data are therefore crucial.

Test Data Properties

The test data should be carefully chosen and have the following key characteristics:

Realistic:

By realistic, we mean that the data should be correct in real-life settings. To test the ‘Age’ field, for example, all of the field values should be positive and 18 or above, since university admissions candidates are typically at least 18 years old (the business requirements might define this differently). If testing is done with realistic test data, the app will be more robust, because most potential flaws can be caught with realistic data. Another benefit of realistic data is its reusability, which saves time and effort because we do not have to create new data every time.

While we’re talking about realistic data, I’d like to introduce the concept of the golden data set (GDS). A golden data set is one that includes nearly all of the scenarios that could arise in a real project. Using the GDS, we can provide wide test coverage. In my organization, I use the GDS for regression testing, which lets me test the scenarios that could arise once the code is deployed, for instance a failure code.

There are many test data generators on the market that examine the column characteristics and user definitions in the database and generate realistic test data based on this information. SQL Data Generator, DTM Data Generator, and Mockaroo are a few notable examples of tools that produce data for database testing.

Practically valid:

This is similar to realistic, but not the same. This property relates more to the AUT’s business logic. For example, a value of 60 is realistic in the age field but practically invalid for an applicant to a graduate or master’s program. An appropriate age range in this scenario would be 18-25 years (this might be defined in the requirements).
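The difference between the two properties can be expressed as two separate checks. This is a minimal sketch that uses the age limits from the example; the function names are hypothetical, and real limits would come from the business requirements.

```python
def is_realistic(age):
    """Realistic for the 'Age' example: a whole number of 18 or above."""
    return isinstance(age, int) and not isinstance(age, bool) and age >= 18


def is_practically_valid(age, low=18, high=25):
    """Practically valid: realistic and inside the range the business logic expects."""
    return is_realistic(age) and low <= age <= high


# 60 passes the first check but not the second.
checks_for_60 = (is_realistic(60), is_practically_valid(60))
```

Keeping the checks separate lets you label each test value as realistic, practically valid, or neither when you document the data set.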

Versatile to cover scenarios:

A single scenario may involve several follow-on conditions, so choose the data wisely to cover as many aspects of the scenario as possible with the smallest amount of data. For example, when creating test data for the result module, don’t just consider regular students who complete their program successfully. Pay special attention to students who are repeating the same course but are in different semesters or programs.

Test data preparation techniques

We have reviewed some of the most important properties of test data, as well as how critical test data selection is for database testing. Now let’s talk about test data preparation techniques. There are two main methods for preparing test data:

Method 1: Insert New Data

Create a new database and fill it with all of the data specified in your test cases. Once you’ve inserted all of the required data, start executing your test cases and fill in the ‘Pass/Fail’ columns by comparing the ‘Actual Output’ with the ‘Expected Output.’ Straightforward, isn’t it? But hold on, it’s not that easy.

The following are a few fundamental and critical concerns:

- An empty database instance may not be available.

- In some circumstances, such as performance and load testing, the inserted test data may be insufficient.

- Because of database table dependencies, inserting the complete set of required test data into a blank DB is a difficult task for the tester.

- Inserting only limited test data (tailored to the needs of the test cases) may hide some flaws that would be visible only with a large data set.

- Data insertion may require complex queries and/or procedures, and therefore help from the database developer(s).

The five difficulties listed above are the most serious and visible downsides of this technique. However, there are benefits as well:

- Because the DB contains only the essential data, test case (TC) execution becomes more efficient.

- Because only the data defined in the test cases is present in the database, bug isolation takes very little time.

- Testing and comparing results takes less time.

- The testing process is free of clutter.
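The table-dependency problem mentioned for Method 1 usually comes down to insertion order. This is a minimal sketch using Python’s built-in `sqlite3` module and an in-memory database; the `students`, `courses`, and `results` tables are a hypothetical schema invented for the illustration.

```python
import sqlite3

# Hypothetical schema: results depends on both students and courses.
SCHEMA = """
CREATE TABLE students (id INTEGER PRIMARY KEY, name TEXT NOT NULL);
CREATE TABLE courses  (id INTEGER PRIMARY KEY, title TEXT NOT NULL);
CREATE TABLE results (
    student_id INTEGER NOT NULL REFERENCES students(id),
    course_id  INTEGER NOT NULL REFERENCES courses(id),
    grade      TEXT NOT NULL
);
"""


def build_test_db():
    """Create an empty database and insert test data, parents before children."""
    conn = sqlite3.connect(":memory:")
    conn.execute("PRAGMA foreign_keys = ON")  # SQLite does not enforce FKs by default
    conn.executescript(SCHEMA)
    conn.executemany(
        "INSERT INTO students VALUES (?, ?)",
        [(1, "Test Student A"), (2, "Test Student B")],
    )
    conn.executemany("INSERT INTO courses VALUES (?, ?)", [(10, "Test Course")])
    # The dependent table goes last, once the rows it references exist.
    conn.executemany(
        "INSERT INTO results VALUES (?, ?, ?)",
        [(1, 10, "A"), (2, 10, "F")],
    )
    conn.commit()
    return conn


conn = build_test_db()
```

With foreign keys enforced, a result row that points at a missing student is rejected immediately, so ordering mistakes in the insertion script show up while the data is being prepared rather than during test execution.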

Method 2: Choose sample data subset from actual Database data

This is a more practical and feasible method of preparing test data. It does, however, require strong technical skills and a thorough understanding of the DB schema and SQL. In this method, you copy production data and use it for testing, replacing some field values with dummy values. Because it reflects production data, this is the best sample subset for your testing. However, due to data security and privacy concerns, this may not always be possible.
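Selecting a subset and replacing sensitive values can be sketched as follows. This is a minimal sketch, assuming the production rows have already been exported as a list of dictionaries; the field names and the hashing scheme are assumptions for the illustration, and a plain hash like this is not a substitute for an anonymization process approved by your security team.

```python
import hashlib
import random


def mask_value(value, prefix):
    """Replace a sensitive value with a stable dummy token derived from it."""
    digest = hashlib.sha256(value.encode("utf-8")).hexdigest()[:8]
    return f"{prefix}_{digest}"


def sample_and_mask(rows, sample_size, sensitive_fields, seed=0):
    """Pick a repeatable random subset and mask the listed fields in copies."""
    rng = random.Random(seed)
    subset = rng.sample(rows, min(sample_size, len(rows)))
    masked = []
    for row in subset:
        copy = dict(row)  # never modify the source rows
        for field in sensitive_fields:
            copy[field] = mask_value(str(copy[field]), field)
        masked.append(copy)
    return masked


# Hypothetical production-like rows.
production = [
    {"id": 1, "name": "Alice Example", "email": "alice@example.com", "grade": "A"},
    {"id": 2, "name": "Bob Example", "email": "bob@example.com", "grade": "C"},
    {"id": 3, "name": "Carol Example", "email": "carol@example.com", "grade": "F"},
]
masked = sample_and_mask(production, 2, ["name", "email"])
```

Because the token is derived from the original value, the same person gets the same dummy value in every table, which keeps joins between masked tables intact.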

Takeaway: The section above covered the techniques for preparing test data. In a nutshell, there are two methods: create new data or select a subset from existing data. Either way, the chosen data must cover a variety of test situations, including valid and invalid tests, performance tests, and null tests.

In this last part, let’s take a quick tour of data generation approaches. These approaches come in handy when we need to generate new data.

Test Data Generation Approaches

There are 4 approaches to test data generation:

- Manual test data generation,

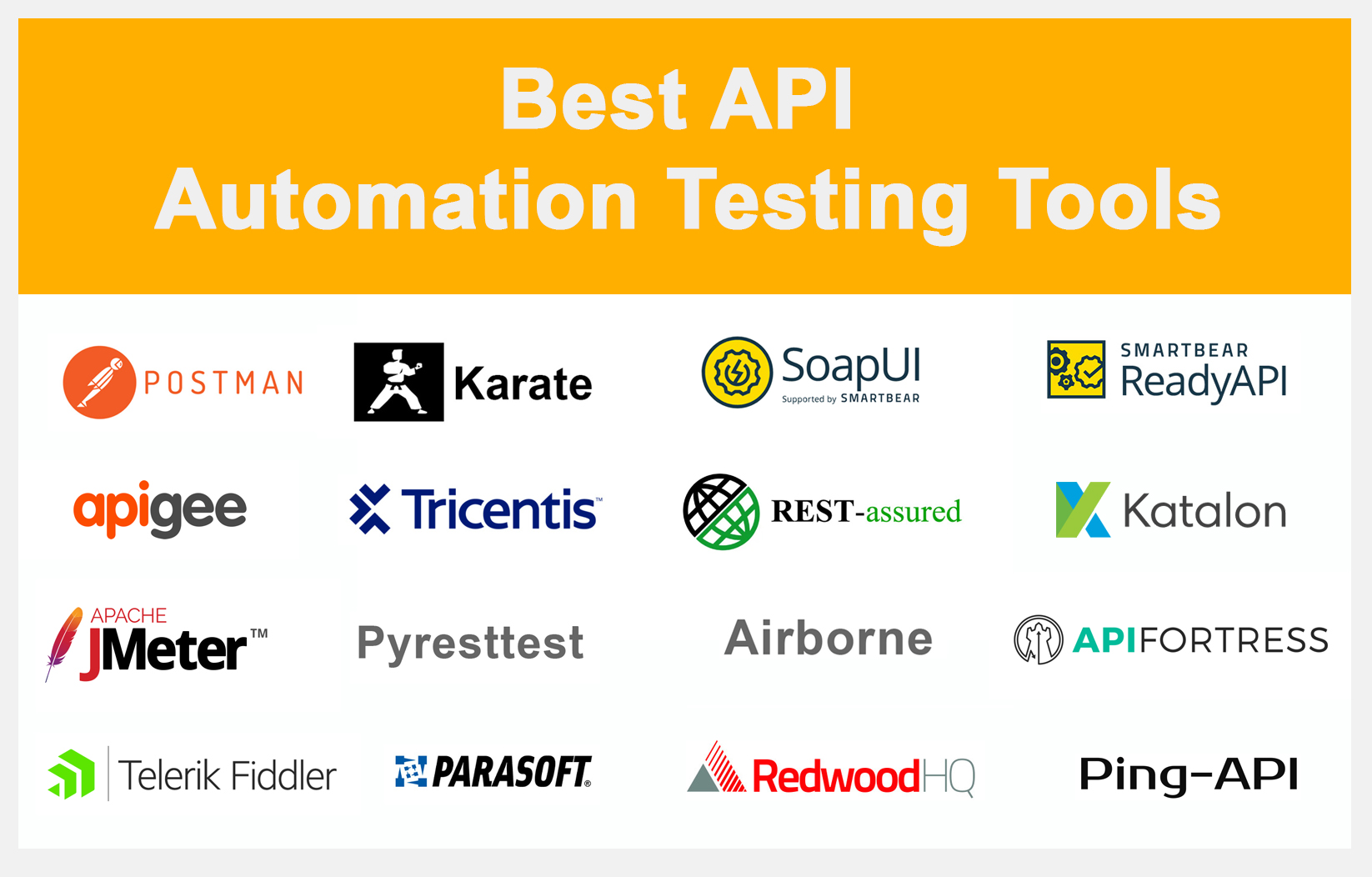

- Automation,

- Back-end data injection,

- Third-party testing tools.

| Approach | Description |
| --- | --- |
| Manual test data generation | Testers enter test data manually according to the test case criteria. It’s a time-consuming process that’s also prone to mistakes. |
| Automated test data generation | Data generation tools are used to create the data. The key benefit of this method is its speed and precision. However, it is more expensive than manual test data generation. |
| Back-end data injection | SQL queries are used to insert the data. This method can also be used to update data in the database. It is quick and efficient, but it must be implemented with care to avoid corrupting the existing database. |
| Third-party tools | There are tools on the market that can analyze your test scenarios and then produce or inject data to offer comprehensive coverage. These tools are precise since they are tailored to the needs of the business. However, they are rather pricey. |

Each approach has its own advantages and disadvantages. Choose the one that meets your business and testing requirements. One of the testers’ primary roles is to create complete software test data in accordance with industry standards, legislation, and the project’s baseline documents.
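For back-end data injection, one way to reduce the risk of corrupting the existing database is to inject each batch inside a single transaction. This is a minimal sketch using Python’s built-in `sqlite3` module; the `accounts` table and its CHECK constraint are hypothetical.

```python
import sqlite3


def inject_rows(conn, rows):
    """Insert all rows or none; `with conn` commits on success and rolls back on error."""
    try:
        with conn:
            conn.executemany(
                "INSERT INTO accounts (id, balance) VALUES (?, ?)", rows
            )
    except sqlite3.Error:
        return False
    return True


conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE accounts ("
    "id INTEGER PRIMARY KEY, "
    "balance INTEGER NOT NULL CHECK (balance >= 0))"
)

ok = inject_rows(conn, [(1, 100), (2, 50)])
# The second row violates the CHECK constraint, so the whole batch is rolled back.
bad = inject_rows(conn, [(3, 10), (4, -5)])
row_count = conn.execute("SELECT COUNT(*) FROM accounts").fetchone()[0]
```

After the failed batch the table still holds only the two rows from the first call, so a broken injection script leaves the database in its previous state.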

Test Data for White Box Testing

In white box testing, test data is derived from a close examination of the code under test. The following factors should be considered when selecting test data:

- Branch coverage: test data can be prepared so that all branches in the program source code are tested at least once.

- Path testing: test data can be prepared so that all paths in the program source code are exercised at least once, covering as many scenarios as possible.
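Choosing data by reading the code can be illustrated with a small function. This is a minimal sketch; `grade` is a hypothetical unit under test with a pass mark of 50 assumed for the example, and the inputs are picked so that every branch outcome is taken at least once.

```python
def grade(score):
    """Hypothetical unit under test with a range check and a pass/fail branch."""
    if score < 0 or score > 100:
        raise ValueError("score out of range")
    if score >= 50:
        return "pass"
    return "fail"


# One input per branch outcome, chosen by reading the code above.
BRANCH_DATA = [
    (-1, ValueError),   # first condition of the range check is true
    (101, ValueError),  # second condition of the range check is true
    (50, "pass"),       # range check false, pass branch taken
    (49, "fail"),       # range check false, pass branch not taken
]


def run_branch_data():
    """Run every input and report whether the outcome matched the expectation."""
    results = []
    for value, expected in BRANCH_DATA:
        try:
            outcome = grade(value)
        except ValueError:
            outcome = ValueError
        results.append(outcome == expected)
    return results


branch_results = run_branch_data()
```

Note that the values 49 and 50 double as boundary data, so a small white box data set often overlaps with the boundary condition data set described earlier.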

Negative API Testing:

- The test data may contain invalid argument types used to call different methods.

- The test data may contain invalid combinations of arguments used to call the program’s methods.
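Both kinds of negative data can be kept in one table of cases. This is a minimal sketch, assuming a hypothetical `enroll` function that validates its arguments; the function, its rules, and the course codes are invented for the illustration.

```python
def enroll(student_id, course_code, semester=1):
    """Hypothetical API under test that validates its arguments."""
    if isinstance(student_id, bool) or not isinstance(student_id, int):
        raise TypeError("student_id must be an int")
    if not isinstance(course_code, str):
        raise TypeError("course_code must be a str")
    if semester not in (1, 2):
        raise ValueError("semester must be 1 or 2")
    # Assumed rule: graduate courses are not offered in semester 1.
    if course_code.startswith("GRAD") and semester == 1:
        raise ValueError("graduate courses are not offered in semester 1")
    return (student_id, course_code, semester)


# (positional args, keyword args, expected exception)
NEGATIVE_CASES = [
    (("42", "MATH101"), {}, TypeError),               # wrong type for student_id
    ((42, 101), {}, TypeError),                       # wrong type for course_code
    ((42, "MATH101"), {"semester": 3}, ValueError),   # value outside the allowed set
    ((42, "GRAD500"), {"semester": 1}, ValueError),   # invalid combination
]


def rejected(args, kwargs, expected):
    """Return True if the call fails with the expected exception type."""
    try:
        enroll(*args, **kwargs)
    except expected:
        return True
    return False


negative_results = [rejected(*case) for case in NEGATIVE_CASES]
```

Storing the expected exception next to each input keeps the test data self-describing, and an unexpected exception type is not swallowed: it propagates and fails the run.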

Additional Information on Test Data

- Performance testing examines how quickly a system responds under a given workload.

- Use case test data: use case testing is an approach for identifying test cases that cover a feature end to end.

- Unit testing is a software development technique in which the smallest testable pieces of a program, referred to as units, are tested separately and independently for proper operation.

- Security testing is the process of determining whether an information system protects its data from malicious intent.

- Hypothesis testing is a statistical process in which an analyst tests a hypothesis about a population parameter. A good and adequate measure is critical not only for the data, but also for the statistical procedures used in hypothesis testing.

- Inferential statistics makes predictions and generalizations about a population by studying a smaller sample. It covers the methods by which statistics from sample observations are used to determine whether the populations studied actually differ.

- Statistics, the science of data collection, analysis, presentation, and interpretation, has two primary divisions: descriptive statistics and inferential statistics.

- Normally distributed data is an underlying assumption in parametric testing, so checking the normality of the data is a prerequisite for many statistical tests.

- When the strength of the correlations weakens and/or the alpha level decreases, larger sample sizes are required to establish statistical significance.

- Some tests of controls provide more convincing evidence of the operating effectiveness of controls than others.

- Making API requests with the HEAD method is a good way to simply check whether a resource is available.

- Don’t rely on data generated by other testers or on data from standard production.

- It’s a plus if the data includes coverage for exceptional scenarios as well.

- Since OPTIONS requests are less tightly defined and less commonly used than the other methods, they’re a suitable candidate for testing for fatal API errors.

- A hypothesis is tested by measuring and studying a random sample of the population being studied.